Circuit Breakers Aren't Optional for Agentic AI — They're Existential

One flaky API call. One slightly incoherent LLM response. Twelve thousand tokens and forty-seven dollars later, your agent is still trying.

Every backend engineer who has worked with distributed systems knows what a circuit breaker does. You hit a dependency that starts failing. Instead of letting every request pile up in a timeout queue, you open the circuit. Traffic stops. The dependency gets breathing room. You try again after a cooldown. Clean, elegant, battle-tested.

Now tell me: when was the last time you saw a circuit breaker in an agentic AI system?

The silence is the problem.

LLM-backed agents have a failure mode that is fundamentally worse than a slow microservice. A microservice either responds or it doesn’t. An LLM agent can respond — coherently, confidently, repeatedly — with the wrong answer. It will call the same broken tool fourteen times in a row. Each attempt burns tokens. Each retry is slightly different because of temperature. Each slightly different response triggers a slightly different tool call. The system is not stuck in an infinite loop. It is stuck in an infinite hallucination spiral.

Few agent frameworks detect this failure mode by default; the best most of them offer is a maximum-iteration cap. You are shipping without brakes.

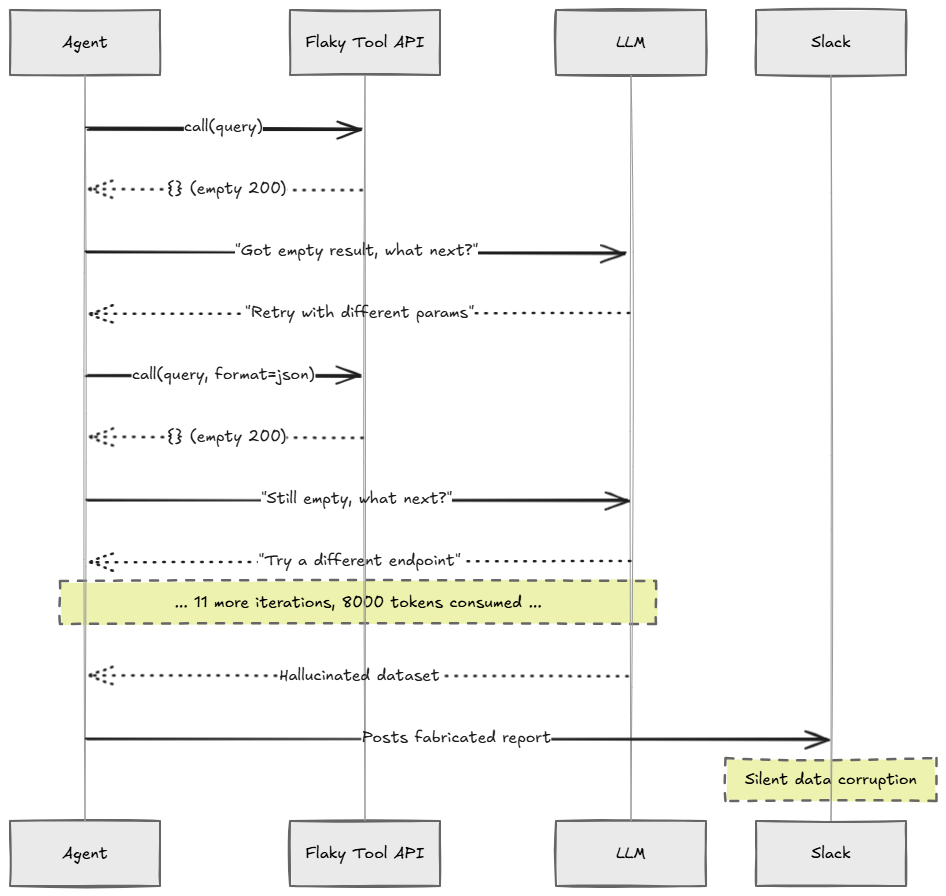

What a Retry-Hallucination Loop Looks Like

Here is a concrete failure scenario. Your agent is tasked with pulling data from an internal API, summarizing it, and posting a report to Slack. The internal API returns a 200 with an empty body due to a downstream timeout — a perfectly legal but useless response.

Step 1: Agent calls /api/v2/data → receives {} (empty 200)

Step 2: LLM sees empty result, decides to "retry with adjusted parameters"

Step 3: Agent calls /api/v2/data?format=json → receives {} again

Step 4: LLM decides format parameter was wrong, tries format=csv

Step 5: Agent calls /api/v2/data?format=csv → receives {} again

...

Step 14: Agent has consumed 8,000 tokens on variations of the same broken call

Step 15: Agent hallucinates a plausible-looking dataset and posts it to Slack

The Slack message looks real. The data is fabricated. No error was raised. No alert fired.

This is not a contrived example. This is Tuesday afternoon in production.
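The trap in this scenario is that an empty 200 passes every HTTP-level health check, so nothing upstream ever registers a failure. Here is a minimal sketch of treating such responses as tool failures. It assumes the tool layer can see the status code and the raw body, and `is_useless_response` is a hypothetical helper name:

```python
import json


def is_useless_response(status_code: int, body: str) -> bool:
    """Return True for responses that should count as tool failures.

    Hypothetical helper: non-2xx responses fail as usual, and a 2xx whose body is
    empty or parses to null / {} / [] / "" is also treated as a failure.
    """
    if not 200 <= status_code < 300:
        return True  # ordinary HTTP failure
    if not body.strip():
        return True  # empty body
    try:
        parsed = json.loads(body)
    except json.JSONDecodeError:
        return False  # non-empty, non-JSON payload: assume it carries data
    return parsed in (None, {}, [], "")


print(is_useless_response(200, "{}"))                # True
print(is_useless_response(200, '{"rows": [1, 2]}'))  # False
```

Counting these as failures is what lets a breaker trip on step 5 instead of letting the model improvise until step 15.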

The Enterprise Use Case: Automated Procurement Auditing

A large logistics company deploys an agent that audits supplier invoices by cross-referencing a procurement database, checking against approved vendor lists, and flagging discrepancies for human review. The procurement database sits behind a legacy API that intermittently returns empty responses during heavy batch processing windows.

Without a cognitive circuit breaker:

The agent retries every empty response with slight query variations

Token costs spike to $800 in a two-hour window during a database maintenance period

The agent eventually hallucinates invoice data and marks fraudulent invoices as clean

The finance team discovers the error three weeks later during a manual audit

With a circuit breaker:

First empty response: logged as anomaly

Third consecutive empty response: circuit opens, agent escalates to human queue

Alert fires to on-call engineer within 90 seconds

No fabricated data, no unchecked fraudulent invoices
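The "with a circuit breaker" timeline above amounts to a small policy keyed on the streak of consecutive empty responses. Here is a sketch that assumes a threshold of three to match the example. The `Action` names are illustrative and do not come from any library:

```python
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"        # healthy response, nothing to do
    LOG_ANOMALY = "log_anomaly"  # empty response, still below the threshold
    ESCALATE = "escalate"        # threshold reached: open circuit, page a human


def action_for(consecutive_empty: int, threshold: int = 3) -> Action:
    """Map the current streak of empty tool responses to what the agent should do."""
    if consecutive_empty <= 0:
        return Action.CONTINUE
    if consecutive_empty < threshold:
        return Action.LOG_ANOMALY
    return Action.ESCALATE


streak = 0
for body in [{}, {}, {}]:
    # Any non-empty body resets the streak, just like a successful tool call.
    streak = streak + 1 if body == {} else 0
    print(streak, action_for(streak).value)
# 1 log_anomaly
# 2 log_anomaly
# 3 escalate
```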

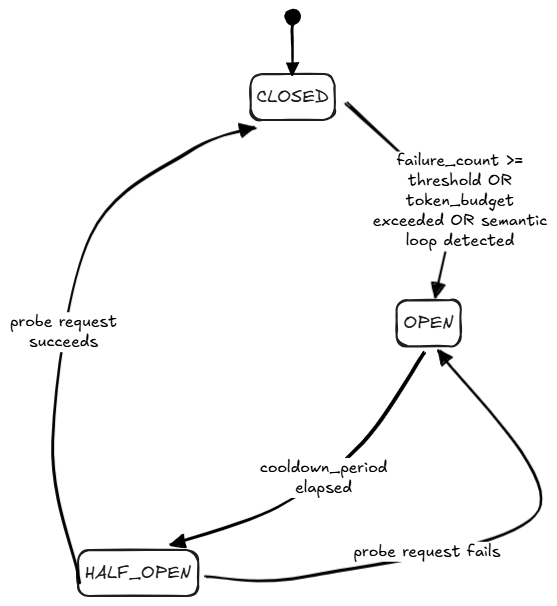

The Circuit Breaker State Machine

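Like a classic breaker, a cognitive circuit breaker moves between three states: CLOSED (calls flow normally), OPEN (calls are blocked and the task is escalated), and HALF_OPEN (the cooldown has expired and a trial call is allowed). What makes it cognitive is what trips it. It is not just a retry counter; it has three distinct trip conditions: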

Tool failure threshold — N consecutive failed or empty tool responses

Semantic similarity check — repeated LLM outputs that are too similar (agent is spinning)

Token budget threshold — cumulative tokens for a single task exceed a cap
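All three trip conditions feed the same state machine. The sketch below writes the transitions as a pure function using conventional circuit-breaker semantics: a successful half-open trial closes the circuit, and a failed one reopens it. The `Event` names are assumptions for illustration:

```python
from enum import Enum


class State(Enum):
    CLOSED = "closed"
    OPEN = "open"
    HALF_OPEN = "half_open"


class Event(Enum):
    TRIP = "trip"                  # any of the three trip conditions fired
    COOLDOWN_ELAPSED = "cooldown"  # OPEN has lasted long enough
    TRIAL_SUCCEEDED = "success"    # the single half-open call worked
    TRIAL_FAILED = "failure"       # the single half-open call failed


TRANSITIONS: dict[tuple[State, Event], State] = {
    (State.CLOSED, Event.TRIP): State.OPEN,
    (State.OPEN, Event.COOLDOWN_ELAPSED): State.HALF_OPEN,
    (State.HALF_OPEN, Event.TRIAL_SUCCEEDED): State.CLOSED,
    (State.HALF_OPEN, Event.TRIAL_FAILED): State.OPEN,
    (State.HALF_OPEN, Event.TRIP): State.OPEN,
}


def next_state(state: State, event: Event) -> State:
    """Return the new state; events that don't apply leave the state unchanged."""
    return TRANSITIONS.get((state, event), state)


s = State.CLOSED
for e in [Event.TRIP, Event.COOLDOWN_ELAPSED, Event.TRIAL_FAILED,
          Event.COOLDOWN_ELAPSED, Event.TRIAL_SUCCEEDED]:
    s = next_state(s, e)
    print(e.value, "->", s.value)
```

Keeping the transitions in a table makes the missing cases obvious. For example, a TRIP while already OPEN is a no-op, which is what stops one incident from paging a human twice.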

Building the Cognitive Circuit Breaker

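```python
# cognitive_circuit_breaker.py
import asyncio
import hashlib
from collections import deque
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from enum import Enum
from typing import Any, Awaitable, Callable

# Async callback invoked as handler(task_id, reason) when the circuit opens.
EscalationHandler = Callable[[str, str], Awaitable[None]]


class CircuitState(Enum):
    CLOSED = "closed"        # normal operation
    OPEN = "open"            # tripped: block further calls
    HALF_OPEN = "half_open"  # cooldown elapsed: allow one trial call


@dataclass
class CircuitBreakerConfig:
    tool_failure_threshold: int = 3  # consecutive failed/empty tool results before tripping
    token_budget_limit: int = 8000   # cumulative tokens allowed for a single task
    cooldown_seconds: int = 60       # time spent OPEN before moving to HALF_OPEN


class CognitiveCircuitBreaker:
    def __init__(self, task_id: str, config: CircuitBreakerConfig):
        self.task_id = task_id
        self.config = config
        self.state = CircuitState.CLOSED
        self.consecutive_failures = 0
        self.tokens_consumed = 0
        self.recent_output_hashes: deque[str] = deque(maxlen=5)
        self.opened_at: datetime | None = None
        self.escalation_handler: EscalationHandler | None = None
        # Hold references to escalation tasks so they aren't garbage-collected mid-flight.
        self._pending_escalations: set[asyncio.Task] = set()

    def record_tokens(self, count: int) -> None:
        self.tokens_consumed += count
        if self.tokens_consumed >= self.config.token_budget_limit:
            self._trip(f"Token budget exceeded: {self.tokens_consumed} tokens")

    def record_tool_result(self, result: Any, success: bool) -> None:
        # Errors, None, and an empty {} body (the "empty 200") all count as failures.
        if not success or result is None or result == {}:
            self.consecutive_failures += 1
            if self.state == CircuitState.HALF_OPEN:
                self._trip("Trial call failed while half-open")
            elif self.consecutive_failures >= self.config.tool_failure_threshold:
                self._trip(
                    f"Tool failure threshold: {self.consecutive_failures} consecutive failures"
                )
        else:
            self.consecutive_failures = 0
            if self.state == CircuitState.HALF_OPEN:
                # The trial call succeeded: close the circuit again.
                self.state = CircuitState.CLOSED
                self.opened_at = None

    def record_llm_output(self, output: str) -> None:
        # Exact-match hashing only catches verbatim repeats (ignoring case and outer
        # whitespace); swap for embedding similarity in production.
        output_sig = hashlib.md5(output.strip().lower().encode()).hexdigest()
        if output_sig in self.recent_output_hashes:
            self._trip("Loop detected: repeated LLM output")
        self.recent_output_hashes.append(output_sig)

    def is_open(self) -> bool:
        """True while calls should be blocked; moves OPEN -> HALF_OPEN once the cooldown ends."""
        if self.state == CircuitState.OPEN:
            cooldown = timedelta(seconds=self.config.cooldown_seconds)
            if self.opened_at and datetime.now(timezone.utc) >= self.opened_at + cooldown:
                self.state = CircuitState.HALF_OPEN
                return False
            return True
        return False

    def _trip(self, reason: str) -> None:
        if self.state == CircuitState.OPEN:
            return  # already open: don't escalate the same incident twice
        self.state = CircuitState.OPEN
        self.opened_at = datetime.now(timezone.utc)
        print(f"[CIRCUIT OPEN] Task {self.task_id}: {reason}")
        if self.escalation_handler:
            # Fire-and-forget; needs a running event loop (e.g. inside a FastAPI handler).
            task = asyncio.create_task(self.escalation_handler(self.task_id, reason))
            self._pending_escalations.add(task)
            task.add_done_callback(self._pending_escalations.discard)

    def reset(self) -> None:
        self.state = CircuitState.CLOSED
        self.consecutive_failures = 0
        self.tokens_consumed = 0
        self.recent_output_hashes.clear()
        self.opened_at = None
```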
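The hash check in `record_llm_output` only catches verbatim repeats. An agent that rephrases the same failing request on every turn slips straight past it. Below is a sketch of the embedding-similarity upgrade the code comment points to. It assumes you already have one embedding vector per output (how you compute it is out of scope). The 0.95 threshold and the `SimilarityLoopDetector` name are assumptions to tune against your own traffic:

```python
import math
from collections import deque


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class SimilarityLoopDetector:
    """Flags an output whose embedding is too close to any of the last `window` outputs."""

    def __init__(self, threshold: float = 0.95, window: int = 5):
        self.threshold = threshold
        self.recent: deque[list[float]] = deque(maxlen=window)

    def is_loop(self, embedding: list[float]) -> bool:
        looping = any(
            cosine_similarity(embedding, prev) >= self.threshold for prev in self.recent
        )
        self.recent.append(embedding)
        return looping


detector = SimilarityLoopDetector(threshold=0.95)
print(detector.is_loop([1.0, 0.0, 0.2]))     # False: nothing to compare against yet
print(detector.is_loop([0.98, 0.01, 0.21]))  # True: near-duplicate direction
print(detector.is_loop([0.0, 1.0, 0.0]))     # False: a genuinely different output
```

In the breaker, `record_llm_output` would call `is_loop` on the output's embedding and trip when it returns True, in place of the hash lookup.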

Wiring It Into a FastAPI Agent Endpoint

```python
# agent_runner.py
import json
from datetime import datetime, timezone

import redis.asyncio as redis
from fastapi import FastAPI, HTTPException
from openai import AsyncOpenAI
from pydantic import BaseModel

from cognitive_circuit_breaker import CircuitBreakerConfig, CognitiveCircuitBreaker

app = FastAPI()
oai = AsyncOpenAI()  # reads OPENAI_API_KEY from the environment
r = redis.from_url("redis://localhost:6379")

MAX_TURNS = 10


class TaskRequest(BaseModel):
    task_id: str
    instruction: str


async def escalate_to_human(task_id: str, reason: str) -> None:
    """Route a tripped task to the human review queue."""
    payload = {
        "task_id": task_id,
        "reason": reason,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "requires_human": True,
    }
    await r.lpush("agent:human_review_queue", json.dumps(payload))
    print(f"[ESCALATED] {task_id} routed to human review queue")


async def execute_tool_from_output(output: str) -> dict | None:
    # Stub: simulates a flaky API that returns an empty 200 body.
    return {}


def circuit_open_error(task_id: str) -> HTTPException:
    return HTTPException(
        status_code=503,
        detail={
            "error": "circuit_open",
            "task_id": task_id,
            "message": "Agent circuit tripped. Task escalated to human review.",
        },
    )


@app.post("/run-task")
async def run_task(req: TaskRequest):
    config = CircuitBreakerConfig(
        tool_failure_threshold=3,
        token_budget_limit=6000,
        cooldown_seconds=60,
    )
    breaker = CognitiveCircuitBreaker(req.task_id, config)
    breaker.escalation_handler = escalate_to_human

    messages = [
        {"role": "system", "content": "You are a procurement audit agent."},
        {"role": "user", "content": req.instruction},
    ]

    for iteration in range(MAX_TURNS):
        if breaker.is_open():
            raise circuit_open_error(req.task_id)

        response = await oai.chat.completions.create(model="gpt-4o", messages=messages)
        breaker.record_tokens(response.usage.total_tokens)
        output = response.choices[0].message.content or ""  # content can be None
        breaker.record_llm_output(output)

        # Never return an answer produced after the budget or loop check tripped.
        if breaker.is_open():
            raise circuit_open_error(req.task_id)
        if "FINAL_ANSWER:" in output:
            return {"task_id": req.task_id, "result": output, "iterations": iteration + 1}

        tool_result = await execute_tool_from_output(output)
        breaker.record_tool_result(tool_result, success=bool(tool_result))

        messages.append({"role": "assistant", "content": output})
        messages.append({"role": "user", "content": f"Tool result: {tool_result}"})

    # A trip on the final turn should still surface as an escalation, not a generic 500.
    if breaker.is_open():
        raise circuit_open_error(req.task_id)
    raise HTTPException(status_code=500, detail="Max iterations reached without resolution")
```

Failure Cascade Without a Circuit Breaker

Three Actionable Takeaways

Add a token budget circuit breaker to every agent task before your next deployment. Track cumulative token usage per task_id. When it crosses your threshold, stop and escalate. This alone prevents the worst cost blowouts.

Implement semantic deduplication on LLM outputs. You don’t need embeddings to start. A rolling hash of the last five outputs catches trivial spin loops. Upgrade to cosine similarity once you have the infrastructure.

Build an escalation handler that routes to a human review queue, not just a log line. A log nobody reads is not an escalation path. Use Redis, a task queue, or a Slack webhook. The output must surface to a human within minutes.
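On the first takeaway: the endpoint above creates a new breaker on every request, so a client that resubmits the same `task_id` gets a fresh budget each time. Below is a minimal sketch of a budget that accumulates across calls, keyed by `task_id`. It uses an in-process dict for clarity, and `TaskTokenBudget` is a hypothetical name. A multi-worker deployment would need shared storage, such as the Redis instance already in use:

```python
from collections import defaultdict


class TaskTokenBudget:
    """Cumulative token accounting per task_id, shared across requests in one process."""

    def __init__(self, limit: int):
        self.limit = limit
        self.used: defaultdict[str, int] = defaultdict(int)

    def charge(self, task_id: str, tokens: int) -> bool:
        """Add `tokens` to the task's total; return False once the budget is exhausted."""
        self.used[task_id] += tokens
        return self.used[task_id] < self.limit

    def remaining(self, task_id: str) -> int:
        return max(self.limit - self.used.get(task_id, 0), 0)


budget = TaskTokenBudget(limit=6000)
print(budget.charge("invoice-audit-17", 2500))  # True
print(budget.charge("invoice-audit-17", 2500))  # True
print(budget.charge("invoice-audit-17", 2500))  # False: 7500 >= 6000, stop and escalate
print(budget.remaining("invoice-audit-17"))     # 0
```

The `>= limit` cut-off matches the breaker's `record_tokens`, so the two checks agree on when a task is out of budget.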

The Stakes Are Higher Than You Think

A cascading microservice failure takes down a feature. An open circuit stops the damage.

A cascading LLM agent failure takes down a feature and posts fabricated data to your Slack, sends incorrect emails to customers, or approves transactions that should have been rejected.

The circuit breaker is not a nice-to-have in distributed systems. It is the mechanism that separates systems that degrade gracefully from systems that fail catastrophically.

In agentic AI, the failure modes are not just technical — they are semantic. The system looks like it is working while it destroys the integrity of your data.

When your agent enters a hallucination loop at 3am, what is your blast containment strategy?

Have you experienced a runaway agent in production? What was the failure mode, and how did you detect it?